Why not just use Puppeteer/Selenium to emulate Chrome?

When your Python or Node.js scraper fails to get data, using Puppeteer or Selenium is usually the most obvious alternative. But these two, while doing wonders in terms of automation and flexibility, are slow, expensive in hardware resources to keep running at scale, and take a lot of work to support.

Is it any better than using Chrome extension XYZ?

Scraping Chrome extensions might be good when you are getting started and only need some light scraping, but for heavy-duty work and better performance you are better off with cloud-based solutions like ScrapeNinja.

Just copy and paste the code of your extractor function into the "extractor" field in the ScrapeNinja sandbox, then put the generated ScrapeNinja code into your local Node.js script, scraper.js. In that script, retrieve your input from node-fetch or the file system; the extractor function can now be called as extract(). When you run your scraper with the extractor in ScrapeNinja, the JSON data is located in the results variable.

Cheerio in the real world

Are you using cheerio in production? Add it to the wiki!

Sponsors

Does your company use Cheerio in production? Please consider sponsoring this project! Your help will allow maintainers to dedicate more time and resources to its development and support.

Backers

Become a backer to show your support for Cheerio and help us maintain and improve this open source project.

Special thanks

This library stands on the shoulders of some incredible developers. A special thanks to:

Felix has a knack for writing speedy parsing engines. He completely re-wrote both node-htmlparser and node-soupselect from the ground up, making both of them much faster and more flexible. Cheerio would not be possible without his foundational work.

The core API of jQuery is the best of its class, and despite dealing with all the browser inconsistencies the code base is extremely clean and easy to follow. Much of cheerio's implementation and documentation is from jQuery.

The style, the structure, the open-source"-ness" of this library comes from studying TJ's style and using many of his libraries. This dude consistently pumps out high-quality libraries and has always been more than willing to help or answer questions. You rock TJ.

This video shows how easy it is to use cheerio and how much faster cheerio is than JSDOM + jQuery.
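As a rough sketch of the extractor workflow described in this post (write an extractor function, paste it into the ScrapeNinja sandbox, then call it as extract() in your own script), an extractor might look like the function below. It assumes the extractor receives the raw HTML and the cheerio module as its two arguments; the selectors and the returned field names (`title`, `heading`) are purely illustrative and are not taken from any real target page.

```javascript
// Hypothetical extractor: takes raw HTML plus the cheerio module.
// The selectors and field names are illustrative assumptions only.
function extract(input, cheerio) {
  // Parse the markup into a jQuery-like queryable document.
  const $ = cheerio.load(input);

  // Return a plain object so the result serializes cleanly to JSON.
  return {
    title: $('title').text().trim(),
    heading: $('h1').first().text().trim(),
  };
}
```

To try it locally, pass it HTML retrieved with node-fetch or read from the file system, together with the cheerio module, for example `extract(html, require('cheerio'))`. Passing cheerio in as an argument keeps the function free of its own imports, so the same code can be pasted into the sandbox unchanged.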
This video tutorial is a follow-up to Nettut's "How to Scrape Web Pages with Node.js and jQuery", using cheerio instead of JSDOM + jQuery.

Cheerio can parse nearly any HTML or XML document.

Cheerio is not a web browser

Cheerio parses markup and provides an API for traversing/manipulating the resulting data structure. It does not interpret the result as a web browser does. Specifically, it does not produce a visual rendering, apply CSS, load external resources, or execute JavaScript, which is common for a SPA (single page application). This makes Cheerio much, much faster than other solutions. If your use case requires any of this functionality, you should consider browser automation software like Puppeteer and Playwright or DOM emulation projects like JSDom.

The "DOM Node" object

Cheerio collections are made up of objects that bear some resemblance to browser-based DOM nodes. You can expect them to define the following properties:

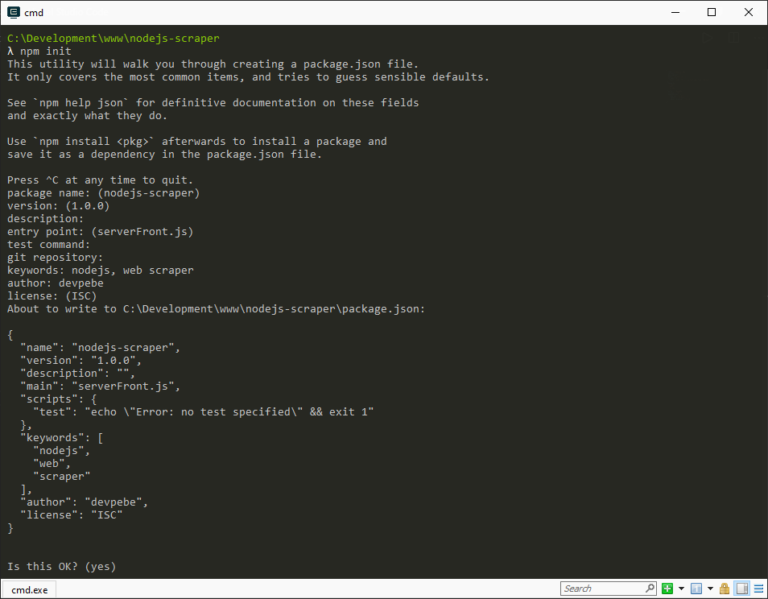

We are currently working on the 1.0.0 release of cheerio on the main branch. The source code for the last published version, 0.22.0, can be found here.

Installation

Cheerio implements a subset of core jQuery. Cheerio removes all the DOM inconsistencies and browser cruft from the jQuery library, revealing its truly gorgeous API.

Cheerio works with a very simple, consistent DOM model. As a result, parsing, manipulating, and rendering are incredibly efficient.

Cheerio wraps around the parse5 parser and can optionally use the forgiving htmlparser2.

const cheerio = require('cheerio') const $ = cheerio.
